Workflows: how do researchers do their research? how does collaboration work?

As part of the Transforming Musicology project, we have tried to examine not just the academic and the technological aspects of musicological research, but also the way in which such work is carried out. Naturally, there is a great deal of variation in the procedures, techniques and tools that people use, but understanding them is important, especially as musicology undergoes the technological transformation that gives our project its title. Where individual, specialised work is needed, it should be accommodated, but where commonalities do exist, they present opportunities for tool and data sharing as well as avenues for supporting collaborative investigation. Also important, as more parts of our research come to involve automated methods, is the ability to be clear about where those methods have been used, and which versions of tools and corpora have been consulted.

Our research into these ways of working was led by Terhi Nurmikko-Fuller at the Oxford e-Research Centre, working with Kevin Page and Richard Lewis. By studying procedures not only in our main research strands, but also in the mini projects, we hoped to see as wide a variety of approaches and materials as possible. Details of the common building blocks found and a discussion of some possible technical options to support those can be found in Terhi Nurmikko-Fuller and Kevin Page, ‘A linked research network that is Transforming Musicology’, Proceedings of the 1st Workshop on Humanities in the Semantic Web (WHiSe), CEUR Workshop Proceedings 1608, Aachen (2016), pp. 73-8. Rather than repeating the contents of that paper, this blog post will explore some of the observations that it is based on, in particular, looking at the workflows as a whole, with less attention on their separate components. We would suggest that readers interested in tools that might support their own research first try the proceedings paper.

A Universal Workflow?

As we have suggested already, identifying a single workflow of activities that describes all musicology-related research is not our goal – it is neither attainable nor desirable to prescribe a standard model for humanistic research. Nonetheless, by limiting the family of interactions we consider and by making the elements in the workflow extremely generic, we can give a very high-level overview of some common ways of approaching a research corpus.

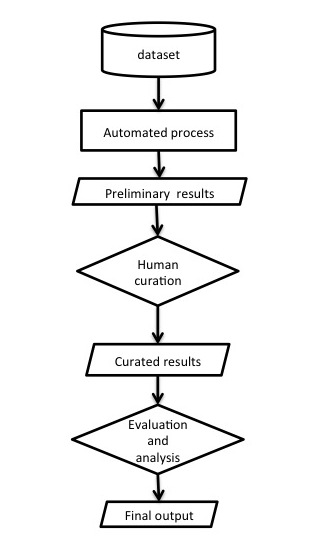

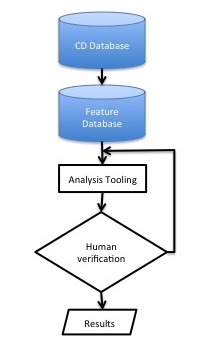

Figure 1 shows such a workflow, in which a corpus (the ‘dataset’ at the top) is in some way interrogated by an automated process (which might, for example, be selecting records by some search mechanism or analysing them). The results of this process are then presented to one or more researchers, who do something with them (described as ‘curation’ in the figure). The results of this can then be analysed and evaluated in a further process that may be wholly or partly automated, and that produces the final results of this investigation. This flow itself can be a component of larger workflows, and there may be opportunities for stages to repeat.

Figure 1. A very high-level view of a common workflow. Rectangles here (and in subsequent figures) represent activities, cylinders collections of data, diamonds activities that explicitly involve humans, and trapeziums represent products or outputs.

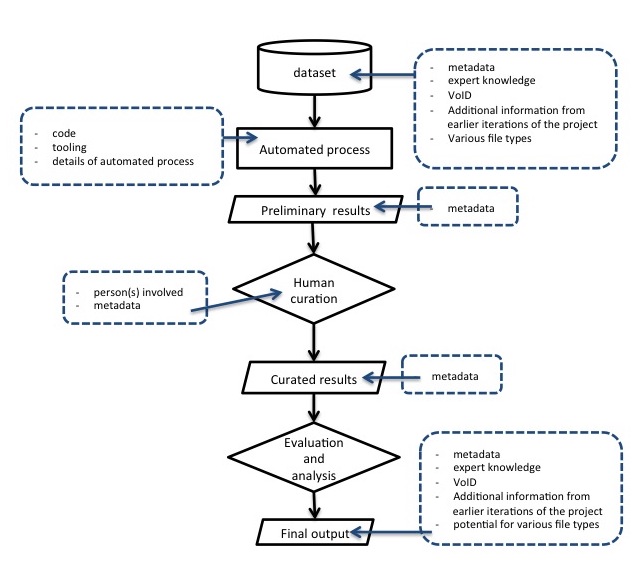

Having identified these steps in a generic, almost banal, form, we can start to identify the attributes of each – for instance what each adds to the information available and what would need to be recorded to best describe what has happened. In figure 2, steps are annotated with a summary of the extra information each carries. By beginning to document these, we can start to see how technologies and standards that help communicate these types of information might be deployed to make the process itself more transparent and sustainable.
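As a purely illustrative sketch (not software used in the project), the generic workflow and its documentation can be expressed in a few lines of Python. The corpus, the tool names and versions, and the three stages below are all hypothetical; the point is only that each stage, automated or human, leaves a record of who or what acted and how the data changed.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One recorded stage of a workflow run."""
    name: str
    kind: str       # "automated" or "human"
    agent: str      # tool name and version, or the researcher involved
    items_in: int
    items_out: int


@dataclass
class Run:
    """The data passing through a workflow, plus a log of what was done to it."""
    corpus: str     # name and version of the corpus consulted
    data: List[str]
    log: List[Step] = field(default_factory=list)

    def apply(self, name: str, kind: str, agent: str,
              fn: Callable[[List[str]], List[str]]) -> "Run":
        # Run the stage, then record what it was and how the data changed.
        result = fn(self.data)
        self.log.append(Step(name, kind, agent, len(self.data), len(result)))
        self.data = result
        return self


# Hypothetical toy corpus and stages, invented for illustration only.
titles = ["Ave Maria", "Ave Regina", "Salve Regina", "Kyrie"]

run = Run(corpus="toy-corpus v0.1", data=titles)
run.apply("select", "automated", "substring-search 1.0",
          lambda xs: [x for x in xs if "Regina" in x])
run.apply("curate", "human", "researcher A",
          lambda xs: [x for x in xs if x != "Ave Regina"])
run.apply("analyse", "automated", "sorter 1.0", sorted)

for step in run.log:
    print(f"{step.name}: {step.kind} by {step.agent}, "
          f"{step.items_in} -> {step.items_out} items")
```

The human curation step is modelled here as a function only so that the example runs; in practice it would be a decision made by a researcher, and the log entry is what makes that decision visible afterwards.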

Figure 2. The same workflow, annotated with the sorts of information added by each stage, including that needed to document the process.

This also provides a useful structure for describing the details of research to others and for indicating some of the specifics of each step, giving a level of detail that might otherwise be difficult to convey in a simple overview.

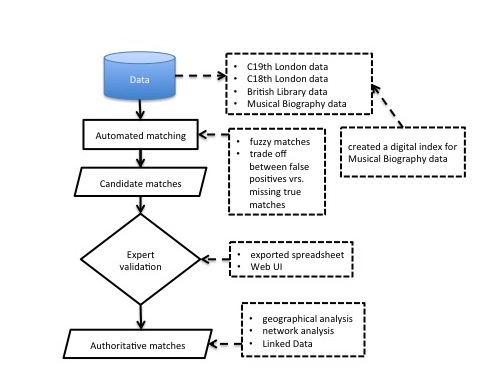

The inConcert mini project combined multiple databases of historical concert information, taking the information from older database formats and producing linked data. Figure 3 gives an overview of the process used by the inConcert mini project to ensure that the information coming from different sources could be united into a common structure.
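To give a flavour of what uniting sources into a common structure involves, here is a minimal sketch in Python. The database names, field names and records are invented for illustration and are not taken from the inConcert data; the sketch also stops at a shared dictionary structure and does not attempt the linked data output that the project produced.

```python
# Hypothetical field mappings: for each source database, the source's own
# field name is mapped to the name used in the common structure.
MAPPINGS = {
    "database_a": {"Venue": "venue", "Date": "date", "Work": "work"},
    "database_b": {"hall": "venue", "performed_on": "date", "piece": "work"},
}


def to_common(source, record):
    """Rename a record's fields to the common names and note where it came from."""
    mapping = MAPPINGS[source]
    common = {target: record[name] for name, target in mapping.items()}
    common["source"] = source  # keep provenance alongside the data
    return common


# Invented example rows, one from each hypothetical database.
rows = [
    ("database_a", {"Venue": "Hall X", "Date": "1890-03-01", "Work": "Symphony 1"}),
    ("database_b", {"hall": "Hall Y", "performed_on": "1891-05-12", "piece": "Overture 2"}),
]

unified = [to_common(source, record) for source, record in rows]
for entry in unified:
    print(entry)
```

Keeping the `source` field means that each unified record can still be traced back to the database it came from.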

Figure 3. A high-level workflow for the inConcert mini project, bringing together historical concert information from different databases.

Division of labour: how do roles change over a project?

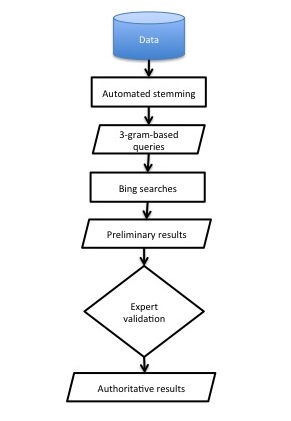

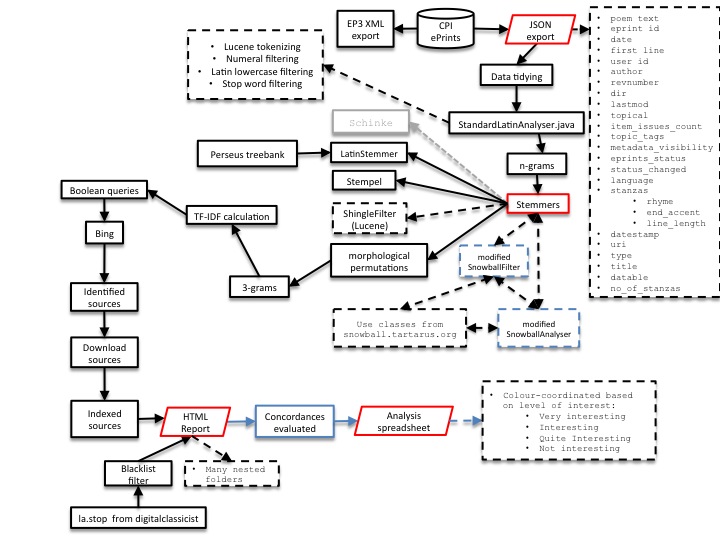

The process of documenting the elements of an instance of research activity can also make clearer the way in which time will be divided between different researchers and processes. Figure 4 shows a high-level workflow for the mini-project based in Southampton exploring sources for conductus texts through web queries using Bing. A more detailed workflow can be seen in figure 5.

Figure 4. A variant of the ‘universal’ model – a high-level workflow for the conductus mini-project.

Figure 5. A more specific and detailed view of the processes, data and technologies involved.

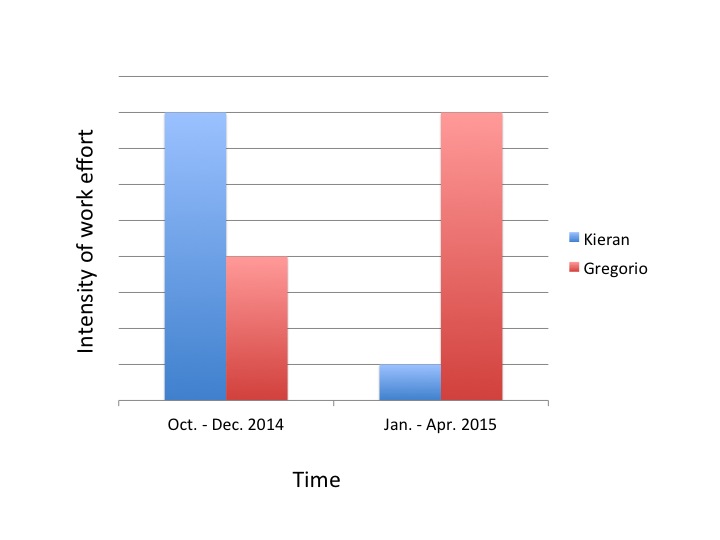

What these charts show is that much of the musicologist’s expertise can only be deployed after much of the implementation is complete and the searches have been executed. In practice, this meant that although the time allocated by the researchers on this project was broadly similar, the points at which those allocations fell were not (see figure 6). This is a common pattern in research using computational tools, and an important consideration when planning researcher time on funded projects, where such things must be pre-specified and are often assumed to be constant.

Figure 6. As is often the case, design and development of the tools, although it does require input from the domain specialists, requires much more time from the technical researcher. Once the technical infrastructure is present, the time demands reverse.

Research using technology vs research on technology

Most of the workflows discussed here have few if any explicit feedback loops. This is partly because they describe operations that were specified in advance to apply for funding. Some of the research process required, for example to select methods, corpora and tools, had already occurred.

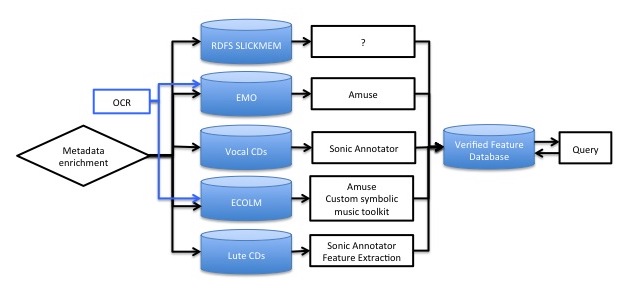

It is important, then, not to assume that these flow charts represent the totality of research. For example, the activity we chose to model for our early music research strand was a search tool that we are working on, which operates transparently over recordings, tablature and score notations, and combines these with catalogue information published as linked data. This model (figure 7) summarises (at a very high level) both the process of extracting the relevant musical information and that of querying the database that arises out of that extraction process, and one can easily imagine a more detailed version of this informing tool design.

Figure 7. Creating and querying a multi-format database.

By contrast, figure 8 shows schematically the process of attempting to generate just one of the flows towards that database. In this case, we model more explicitly the research process required to turn a database of features created by running standard tools over a corpus of recordings into an index that represents features or feature combinations that might be musically meaningful for the materials that we are working with and, ideally, for which analogous features can be extracted from scores. For this version, both human involvement in the middle of the process and an unspecified number of repetitions must be modelled.

Figure 8. Modelling research into the necessary tools.

Workflows and repeatability

Some of the research studied is a single effort which, at its conclusion, produces results that are analysed or published, with no expectation of literally repeating the work, although we might still document the process to help others engaging in similar research. Some research, however, needed to be repeatable.

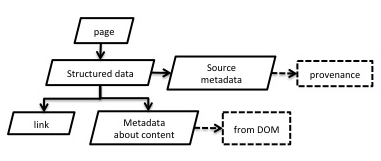

Ben Fields's exploration of online communities of commentators focussed on the genius.com website, where users share texts, usually song lyrics (usually rap and hip-hop), and provide explanatory annotations using a wiki-like interface. The data was extracted using a small piece of software called a spider, which travels around a website, following links and recording the information that it finds. Many spiders, all working in parallel, can explore web pages quite quickly, and are useful when the underlying data of a site is not published as a single download.
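The core idea of a spider can be sketched briefly in Python. This is an illustration of the general technique, not the software used in the project: the "site" below is a hypothetical in-memory dictionary standing in for real web pages, and `fetch` stands in for an HTTP request, so the example makes no network calls and runs a single spider rather than many in parallel.

```python
from collections import deque

# Hypothetical site: each page maps to (text found on the page, outgoing links).
SITE = {
    "/": ("home", ["/songs", "/users"]),
    "/songs": ("song list", ["/songs/1", "/"]),
    "/songs/1": ("lyrics and annotations", ["/users/ann"]),
    "/users": ("user list", ["/users/ann"]),
    "/users/ann": ("profile of ann", ["/songs/1"]),
}


def fetch(url):
    """Stand-in for downloading and parsing a page (an assumption for this sketch)."""
    return SITE[url]


def crawl(start, fetch_page):
    """Visit every page reachable from `start`, breadth first, exactly once."""
    seen = {start}            # pages already queued, so loops are not followed twice
    queue = deque([start])    # pages waiting to be visited
    records = {}              # what the spider has recorded, keyed by page
    while queue:
        url = queue.popleft()
        text, links = fetch_page(url)
        records[url] = text
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return records


records = crawl("/", fetch)
for url, text in records.items():
    print(url, "->", text)
```

A real spider would also need to handle failed requests, respect the site's terms and rate limits, and write its records to a database rather than a dictionary.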

Figure 9. A fully automated workflow for a web spider, constructed by Ben Fields to study users annotating lyrics on a wiki-like song-lyric-sharing website.

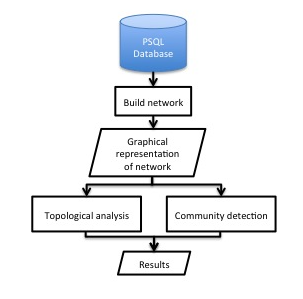

Figure 9 shows a workflow for the web spider itself. The information it gathers is written to a database, and the database itself is used in network analyses, trying to identify communities of users with common practices of annotation, and perhaps common musical tastes. Figure 10 shows a workflow for such an analysis. In this case, it is important that this workflow can be automated and reused, since the website it summarises is dynamic, and changes in ways that we might want to capture.
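To illustrate the kind of network analysis meant here, the following Python sketch links users who have annotated the same song and then groups them into connected components. This is a deliberately crude stand-in for community detection, chosen because it needs only the standard library; it is not the method used in the project, and the user names and records are invented.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical (user, song) annotation records, as might be read from the database.
annotations = [
    ("ann", "song1"), ("bob", "song1"),
    ("bob", "song2"), ("cat", "song2"),
    ("dan", "song3"), ("eve", "song3"),
    ("fay", "song4"),
]


def co_annotation_graph(records):
    """Link two users whenever they have annotated the same song."""
    by_song = defaultdict(set)
    for user, song in records:
        by_song[song].add(user)
    graph = defaultdict(set)
    for user, _ in records:
        graph[user]  # touching the key ensures users with no links still appear
    for users in by_song.values():
        for a, b in combinations(sorted(users), 2):
            graph[a].add(b)
            graph[b].add(a)
    return graph


def components(graph):
    """Group users into connected components using a depth-first search."""
    seen = set()
    groups = []
    for start in sorted(graph):
        if start in seen:
            continue
        seen.add(start)
        stack = [start]
        group = []
        while stack:
            node = stack.pop()
            group.append(node)
            for neighbour in graph[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    stack.append(neighbour)
        groups.append(sorted(group))
    return groups


communities = components(co_annotation_graph(annotations))
print(communities)
```

Because the input is just a list of records, the same analysis can be rerun unchanged whenever the spider collects a fresh snapshot of the site.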

Figure 10. A second workflow, taking the data on user annotations to study the networks of users and their musical interests.

Other resources might also yield similarly structured data. Having reusable software and clearly communicated workflows allows research to be more easily repeated in other contexts.

Appendix: extra diagrams

This blog post has summarised some of the results of our exploration of research workflows and, since not all of the diagrams fit smoothly into that narrative without overwhelming it, many have not been included. Below is a selection of some of the diagrams that did not fit.

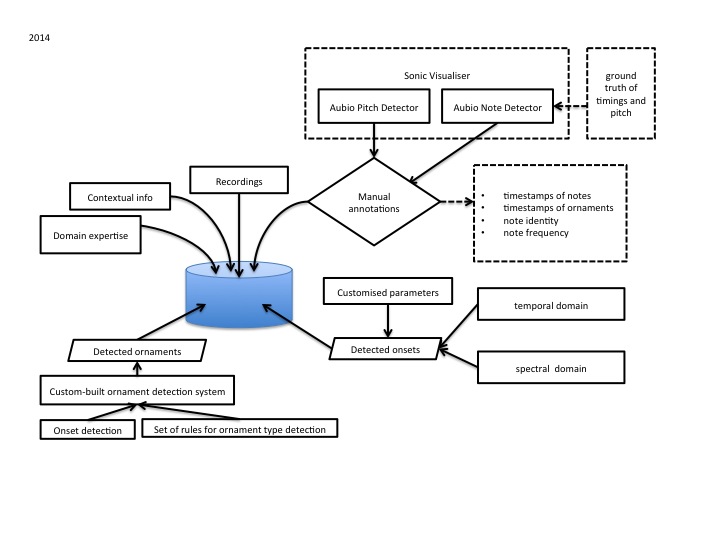

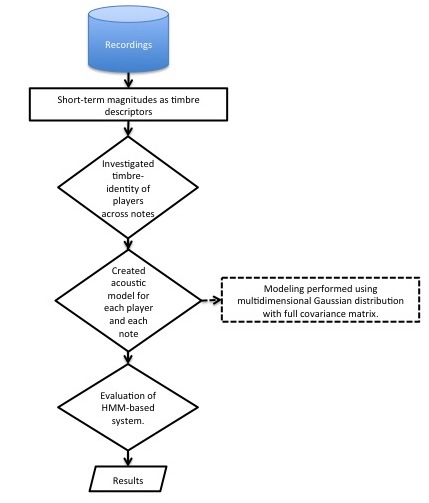

A diagram focussing on information capture from multiple sources, here used for analysing the differing use of ornamentation by a selection of Irish flute players.

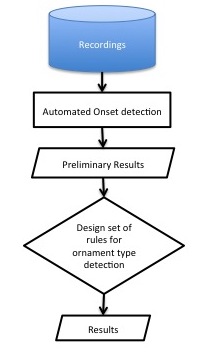

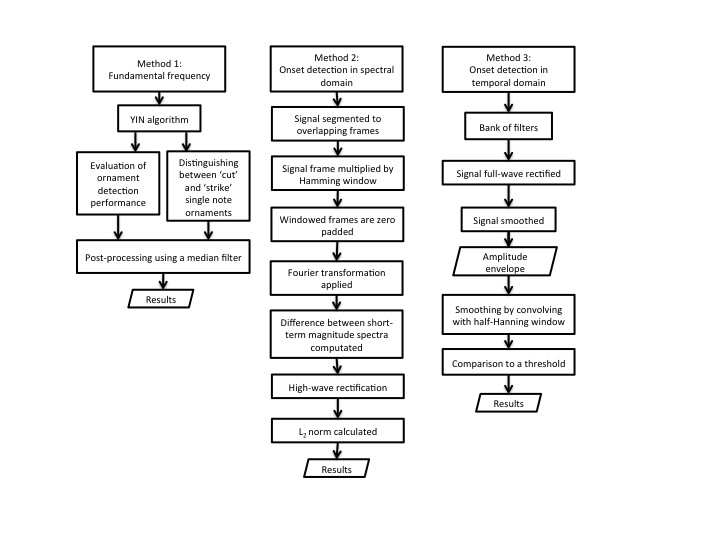

Three more-detailed views of workflows for analysing Irish flute ornamentation.

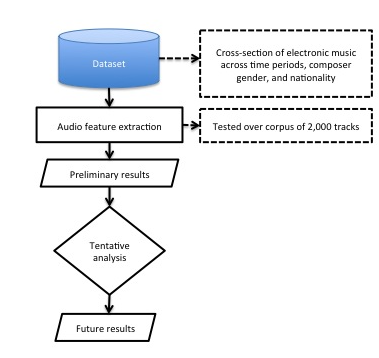

A schematic workflow for the mini project studying a corpus of electronic music using tools drawn from Music Information Retrieval. In this case, the diagram was drawn early on in the process, reflecting something of the planning of the project.
